Shubham Saboo  @Saboo_Shubham_

Sep 23, 2022

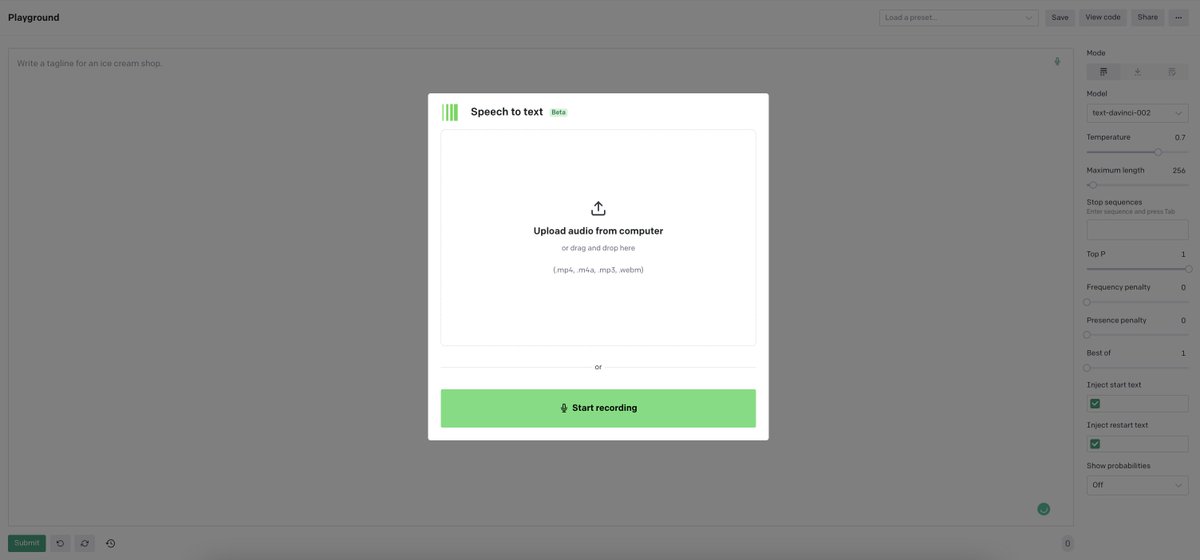

3 reasons to be excited about OpenAI's new speech-to-text model.

It's unlike any audio transcription model that we've ever seen.

(A thread)

1. Best-in-class English transcriptions!

It approaches human-level robustness and accuracy on English speech recognition.

Trained on 680k hours of multilingual and multitask supervised data collected from the web, it's robust to accents, background noise, and technical language.

2. Multilingual transcriptions

The new model can transcribe speech in multiple languages, as well as translate speech in those languages into English.

So you can do:

- English transcription

- Non-English speech to English translation

- Non-English transcription
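The three modes above can be sketched with the open-source `whisper` Python package (installed as `openai-whisper`). This is a minimal sketch, not the only way to call the model: the mode names (`"english"`, `"to_english"`, `"native"`) and the helpers `build_options` and `run` are made up for illustration, while `load_model`, `transcribe`, and the `task` / `language` options come from the package itself.

```python
from typing import Optional


def build_options(mode: str, language: Optional[str] = None) -> dict:
    """Map the thread's three use cases onto transcribe() keyword arguments.

    The mode names are hypothetical labels used only in this sketch.
    """
    if mode == "english":
        # English speech in, English text out.
        return {"task": "transcribe", "language": "en"}
    if mode == "to_english":
        # Non-English speech in, English text out.
        opts = {"task": "translate"}
    elif mode == "native":
        # Non-English speech in, text in the same language out.
        opts = {"task": "transcribe"}
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    if language is not None:
        # Optional language code such as "fr"; if omitted, Whisper detects it.
        opts["language"] = language
    return opts


def run(audio_path: str, mode: str, language: Optional[str] = None,
        model_name: str = "base") -> str:
    """Load a Whisper model and return the recognized text for one file."""
    import whisper  # imported lazily so build_options works without the package

    model = whisper.load_model(model_name)
    result = model.transcribe(audio_path, **build_options(mode, language))
    return result["text"]
```

For example, `run("talk.mp3", "to_english", language="fr")` would translate a French recording into English text, assuming a file named `talk.mp3` exists and `ffmpeg` is installed.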

3. Open source

OpenAI open-sourced the audio transcription models and the inference code, which can serve as a foundation for building useful applications and for further research on robust speech processing.

The model "Whisper" is available in five different variants:

- tiny (39 M)

- base (74 M)

- small (244 M)

- medium (769 M)

- large (1550 M)
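One way to use these numbers is to pick the largest variant that fits a parameter budget. The sketch below does that; the parameter counts are the ones listed above, while the function name `largest_variant_within` and the idea of a "budget" are assumptions made for illustration. Parameter count is only a rough proxy for memory use and speed.

```python
# Parameter counts in millions, as listed in the thread.
WHISPER_VARIANTS = {
    "tiny": 39,
    "base": 74,
    "small": 244,
    "medium": 769,
    "large": 1550,
}


def largest_variant_within(budget_millions: float) -> str:
    """Return the name of the largest variant with at most budget_millions parameters."""
    fitting = [
        (size, name)
        for name, size in WHISPER_VARIANTS.items()
        if size <= budget_millions
    ]
    if not fitting:
        raise ValueError("no Whisper variant fits within the given budget")
    # Tuples compare by size first, so max() picks the largest model that fits.
    return max(fitting)[1]


print(largest_variant_within(300))
```

The returned name can be passed straight to `whisper.load_model(...)`.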

Check out the model card here: github.com/openai/whisper

You can read the research paper on "Robust Speech Recognition via Large-Scale Weak Supervision" to understand how the model works: cdn.openai.com/papers/whisper

Finally, you can combine "Whisper", which transcribes speech with near-human accuracy, with "GPT-3", which generates human-like text, to build innovative products.
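A rough sketch of that combination: transcribe the audio first, then hand the transcript to a text model as part of a prompt. Everything here is an assumption for illustration: `build_prompt`, `speech_to_generated_text`, and the prompt layout are hypothetical, and the `transcribe` and `generate` callables stand in for whatever Whisper and GPT-3 clients you actually use.

```python
from typing import Callable


def build_prompt(transcript: str, instruction: str) -> str:
    """Combine an instruction and a transcript into one text prompt (hypothetical layout)."""
    return f"{instruction.strip()}\n\nTranscript:\n{transcript.strip()}\n"


def speech_to_generated_text(
    audio_path: str,
    transcribe: Callable[[str], str],
    generate: Callable[[str], str],
    instruction: str = "Summarize the transcript in one sentence.",
) -> str:
    """Run speech-to-text, then pass the transcript to a text generator.

    transcribe: takes an audio file path, returns text (e.g. a Whisper wrapper).
    generate:   takes a prompt, returns generated text (e.g. a GPT-3 wrapper).
    """
    transcript = transcribe(audio_path)
    return generate(build_prompt(transcript, instruction))
```

Passing the two models in as plain functions keeps the pipeline independent of any particular client library, so either side can be swapped or stubbed out for testing.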

To understand the bigger picture, check out my GPT-3 book by @O'Reilly Media!

That's a wrap!

Stay tuned for the follow-up content on combining GPT-3 with other ML models to build innovative AI products.

If you liked this thread, consider following me @Shubham Saboo 🦒

Shubham Saboo

Senior AI Evangelist @JinaAI_ | Author @OReillyMedia "GPT-3: Building Innovative NLP Products using LLMs" | Co-Founder @KairosDataLabs | #gpt3 #nlp #ml
